Robotics

News

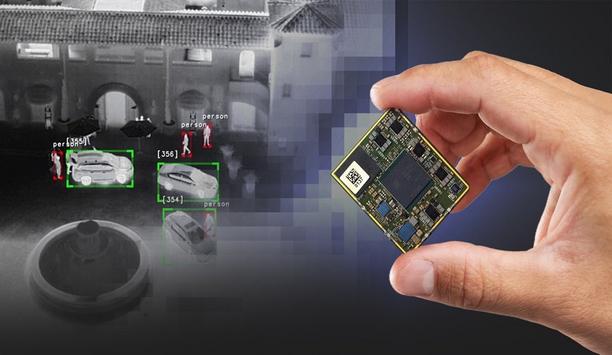

Ambarella, Inc., an edge AI semiconductor company, announced at the ISC West 2024 security expo the continued expansion of its AI system-on-chip (SoC) portfolio with the 5nm CV75S family.

Power- and cost-efficient option

According to Ambarella, these new SoCs provide the industry’s most power- and cost-efficient option for running the latest multi-modal vision-language models (VLMs) and vision-transformer networks. This efficiency makes these AI technologies feasible for a broad range of cost- and power-constrained devices: security cameras for enterprises, smart cities, and retail; industrial robotics and access control; and a host of AI-enabled consumer video devices, such as sports and conferencing cameras.

CV75S family

“With the CV75S family, we are enabling mass-market product designers with the ability to integrate the latest vision-transformer technologies, including VLMs that allow zero-shot image classification and multi-modal inferencing for real-time visual analytics without the need for training,” said Chris Day, VP of Marketing and Business Development at Ambarella.

He adds, “We’re also bringing our advanced AI-based image processing technology to cameras with a wide range of price points, offering significantly greater image quality for a broad spectrum of applications.”

Multi-modal VLM

A typical example of how the CV75S will be used to run VLMs in enterprise cameras is a natural-language search that is processed within the camera to look for any object or scene among the content it has captured. A multi-modal VLM, such as the contrastive language–image pre-training (CLIP) model, can scour the footage and return results almost immediately without being trained on that specific object or context.
This opens a whole new range of AI capabilities for enterprise cameras, which can run AI tasks tailored to their installation and user needs without retraining and deploying new AI models for each task.

CVflow® 3.0 AI engine

This is Ambarella’s first mass-market SoC family to integrate its latest CVflow® 3.0 AI engine, which provides 3x the performance of the prior generation with support for VLMs and vision transformers, as well as advanced AI-based image processing. Additionally, the CV75S integrates the latest generation of Ambarella’s image signal processor, 4KP30 H.264/5 video encoding, dual Arm® Cortex-A76 1.6GHz cores, and USB 3.2 connectivity.

Cooper™ Developer Platform

To accelerate time to market, the CV75S family is supported by Ambarella’s Cooper™ Developer Platform. This recently introduced platform provides comprehensive hardware and software solutions for creating edge AI systems, including powerful, safe, and secure computing and software capabilities. It consists of industrial-grade hardware tools, collectively called Cooper Metal, along with Cooper Foundry, which provides a multi-layer software stack that supports Ambarella’s entire portfolio of AI SoCs.

The CV75S is sampling and will be demonstrated at Ambarella’s invitation-only exhibition at ISC West in Las Vegas.
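
The natural-language search described above can be illustrated with a minimal sketch. In a CLIP-style model, a text encoder and an image encoder map queries and frames into a shared embedding space, and the search ranks frames by similarity to the query. The toy three-dimensional vectors, the frame IDs, and the `search_frames` helper below are assumptions made for illustration; they stand in for real encoder outputs and are not Ambarella's or CLIP's actual API.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search_frames(query_embedding, frame_embeddings, top_k=3):
    """Rank stored frame embeddings against a text-query embedding.

    No per-object training is involved: the ranking relies only on
    both embeddings living in the same vector space.
    """
    scored = [
        (cosine_similarity(query_embedding, embedding), frame_id)
        for frame_id, embedding in frame_embeddings.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [frame_id for _, frame_id in scored[:top_k]]


# Hypothetical embeddings; a real system would get these from the
# model's image encoder (frames) and text encoder (query).
frames = {
    "frame_001": [0.9, 0.1, 0.0],
    "frame_002": [0.1, 0.8, 0.1],
    "frame_003": [0.7, 0.3, 0.1],
}
query = [1.0, 0.0, 0.0]  # stands in for an encoded text query

results = search_frames(query, frames, top_k=2)
print(results)
```

Because the comparison is a plain similarity ranking, a new query needs only a new text embedding rather than a newly trained detector, which is the property the article attributes to zero-shot VLM search.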

Robotic Assistance Devices, Inc. (RAD), a subsidiary of Artificial Intelligence Technology Solutions, Inc., announced that it has delivered a RADDOG LE to the Taylor, Michigan Police Department.

AI-powered, robotic solutions

The delivery of RADDOG LE to the Taylor Police Department marks a historic moment in the integration of technology within law enforcement. This milestone underscores RAD’s commitment to revolutionizing the landscape of security and public safety through AI-powered robotic solutions.

Enhancing public safety

The integration of RADDOG LE by the Taylor Police Department represents a significant advancement in leveraging technology to enhance public safety and streamline operations within the community, supported by a dedicated team of approximately 100 sworn officers.

RADDOG LE, designed and developed by RAD, is a quadruped robot dog built to support law enforcement agencies in a variety of operational scenarios. With advanced mobility, surveillance capabilities, and patrolling functions, RADDOG LE is poised to become an invaluable asset to the Taylor Police Department and other law enforcement agencies throughout the U.S.

A situational, defensive tool

Lt. Jeff Adamisin of the Taylor Police Department commented, “The robotic dog is a situational, defensive tool that gives its user a vision that may not be available without human risk.”

Chief of Police John Blair added, “It puts us face-to-face with the person or situation without a risk of harm. It can act almost like Zoom where you can have interaction without other possible problems.”

Safer and more technologically advanced future

RAD’s Sr. Vice President of Revenue Operations, Troy McCanna, an ex-FBI agent of 23 years, expressed enthusiasm about the new addition, stating, “Together, we embark on a journey towards a safer and more technologically advanced future, where RADDOG stands as a beacon of progress and partnership.”

Troy McCanna adds, “We sincerely hope that the addition of RADDOG within the Taylor PD will add a layer of safety for their officers, and work to de-escalate dangerous situations.”

As of 2020, there were 17,985 police agencies, with more than 800,000 sworn law enforcement officers in the United States.

Deployment in real-life situations

Steve Reinharz, CEO/CTO of AITX and RAD, highlighted, “The Taylor PD plans to exhaustively place RADDOG in real-world situations and share their findings and suggestions as we work to develop future iterations of RADDOG further.”

Steve Reinharz adds, “This historic relationship represents a significant milestone in the evolution of law enforcement technology, demonstrating how cooperation between public and private sectors can foster positive change for community safety. I truly foresee a future where a RADDOG accompanies every police cruiser on every call.”

RAD’s presentation of RADDOG LE to the Taylor Police Department attracted media attention, with several Detroit television stations covering the event.

Matrix, a security and telecom solutions provider, has announced its title sponsorship of 'Footprints 24', the 24th edition of the national technical fest organized by the Faculty of Technology and Engineering (FTE), Maharaja Sayajirao University.

Scheduled to take place from 1st to 3rd March 2024, 'Footprints 24' is a national youth event that showcases the technical prowess of budding engineers from MS University. The three-day tech fiesta will feature a series of competitions, workshops, and exhibitions aimed at fostering innovation and technological advancements.

Matrix's commitment

Matrix's commitment to excellence aligns with the objectives of 'Footprints 24', where students from across the nation will converge to explore, learn, and showcase their technical skills. As the title sponsor, Matrix seeks to play a crucial role in supporting and encouraging the next generation of engineers and innovators.

Ganesh Jivani, CEO at Matrix, expressed enthusiasm about the collaboration, stating, "Matrix is proud to be the title sponsor for 'Footprints 24'. We believe in nurturing and empowering young talents who are the future of technology. This event provides an excellent platform for students to demonstrate their skills and creativity, and we are excited to be a part of this journey."

Matrix's sponsorship

The 'Footprints 24' event will encompass various technical competitions, including robotics, coding challenges, and project exhibitions, providing a comprehensive platform for students to showcase their technical prowess. Matrix's sponsorship reflects its commitment to supporting educational initiatives and fostering innovation within the technology and engineering community.
Matrix looks forward to an exciting collaboration with Maharaja Sayajirao University's Faculty of Technology and Engineering and anticipates a successful and inspiring 'Footprints 24' event.

Expert commentary

Global transportation networks are becoming increasingly interconnected, with digital systems playing a crucial role in ensuring the smooth operation of ports and supply chains. However, this reliance on technology can also create vulnerabilities, as demonstrated by the recent ransomware attack on Nagoya Port. Operations at the port, Japan's busiest shipping hub, were brought to a standstill for two days, highlighting the potential for significant disruption to national economies and supply chains.

Transportation sector

The attack began with the port's legacy computer system, which handles shipping containers, being knocked offline. This forced the port to halt the handling of shipping containers that arrived at the terminal, effectively disrupting the flow of goods. The incident was a stark reminder of the risks associated with the convergence of information technology (IT) and operational technology (OT) in ports and other critical infrastructures.

This is not an isolated incident, but part of a broader trend of escalating cyber threats targeting critical infrastructure. The transportation sector must respond by bolstering its defenses, enhancing its cyber resilience, and proactively countering these threats. The safety and efficiency of our transportation infrastructure, and by extension our global economy, depend on it.

Rising threat to port security and supply chains

OT, once isolated from networked systems, is now increasingly interconnected. This integration has expanded the attack surface for threat actors. A single breach in a port's OT systems can cause significant disruption, halting the movement of containers and impacting the flow of goods. This is not a hypothetical scenario, but a reality that has been demonstrated in recent cyberattacks on major ports.
Adding another layer of complexity is the extended Internet of Things (XIoT), an umbrella term for all cyber-physical systems. XIoT devices, from sensors on shipping containers to automated cranes, are now integral to modern port operations. These devices are delivering safer, more efficient automated vehicles, facilitating geo-fencing for improved logistics, and providing vehicle health data for predictive maintenance.

XIoT ecosystem

However, the XIoT ecosystem also presents new cybersecurity risks. Each connected device is a potential entry point for cybercriminals, and the interconnected nature of these devices means that an attack on one can move laterally and have a ripple effect throughout the system.

The threat landscape is evolving, with cybercriminals becoming more sophisticated and their attacks more damaging and increasingly aimed at disrupting business continuity. The growing interconnectivity between OT and XIoT in port operations and supply chains is also presenting these threat actors with a greater attack surface. Many older OT systems were never designed to be connected in this way and are unlikely to be equipped to deal with modern cyber threats.

Furthermore, the increasing digitization of ports and supply chains has led to a surge in the volume of data being generated and processed. This data, if not properly secured, can be a goldmine for cybercriminals. The potential for data breaches adds another dimension to the cybersecurity challenges facing the transportation sector.

Role of cyber resilience in protecting service availability

As the threats to port security and supply chains become increasingly complex, the concept of cyber resilience takes on a new level of importance. Cyber resilience refers to an organization's ability to prepare for, respond to, and recover from cyber threats.
It goes beyond traditional cybersecurity measures, focusing not just on preventing attacks, but also on minimizing the impact of attacks that do occur and ensuring a quick recovery.

In the context of port operations and supply chains, cyber resilience is crucial. The interconnected nature of these systems means that a cyberattack can have far-reaching effects, disrupting operations not just at the targeted port, but also at other ports and throughout the supply chain. A resilient system is one that can withstand such an attack and quickly restore normal operations.

Port operations and supply chains

The growing reliance on OT and the XIoT in port operations and supply chains presents unique challenges for cyber resilience. OT systems control physical processes and are often critical to safety and service availability. A breach in an OT system can have immediate and potentially catastrophic physical consequences. Similarly, XIoT devices are often embedded in critical infrastructure and can be difficult to patch or update, making them vulnerable to attacks.

Building cyber resilience in these systems requires a multi-faceted approach. It involves implementing robust security measures, such as strong access controls and network segmentation, to prevent attacks. It also involves continuous monitoring and detection to identify and respond to threats as they occur. But perhaps most importantly, it involves planning and preparation for the inevitable breaches that will occur, ensuring that when they do, the impact is minimized and normal operations can be quickly restored.

Building resilience across port security and supply chains

In the face of escalating cyber threats, the transportation sector must adopt a comprehensive approach to cybersecurity.
This involves not just implementing robust security measures, but also fostering a culture of cybersecurity awareness and compliance throughout the organization.

A key component of a comprehensive cybersecurity strategy is strong access controls. This involves ensuring that only authorized individuals have access to sensitive data and systems. It also involves implementing multi-factor authentication and regularly reviewing and updating access permissions. Strong access controls can prevent unauthorized access to systems and data, reducing the risk of both internal and external threats.

Network segmentation

Network segmentation is another crucial measure. By dividing a network into separate segments, organizations can limit the spread of a cyberattack within their network. This can prevent an attack on one part of the network from affecting the entire system. Network segmentation also makes it easier to monitor and control the flow of data within the network, further enhancing security.

Regular vulnerability assessments and patch management are also essential. Vulnerability assessments involve identifying and evaluating potential security weaknesses in the system, while patch management involves regularly updating and patching software to fix these vulnerabilities. These measures can help organizations stay ahead of cybercriminals and reduce the risk of exploitation.

EU’s NIS2 Directive

The transportation sector must also be prepared for greater legislative responsibility in the near future. The EU’s NIS2 Directive recently came into effect, and member states have until October 2024 to put it into law. The Directive aims to increase the overall level of cyber preparedness by mandating capabilities such as Computer Security Incident Response Teams (CSIRTs). Transport is among the sectors labeled as essential by the Directive, meaning it will face a high level of scrutiny.
Getting to grips with the complexities of XIoT and OT integration will be essential for organizations to achieve compliance and avoid fines.

Global transportation infrastructure

Finally, organizations must prepare for the inevitable breaches that will occur. This involves developing an incident response plan that outlines the steps to be taken in the event of a breach. It also involves regularly testing and updating this plan to ensure its effectiveness. A well-prepared organization can respond quickly and effectively to a breach, minimizing its impact and ensuring a quick recovery.

In conclusion, mastering transportation cybersecurity requires a comprehensive, proactive approach. It involves implementing robust technical measures, fostering a culture of cybersecurity awareness, and preparing for the inevitable breaches that will occur. By taking these steps, organizations can enhance their cyber resilience, protect their critical operations, and ensure the security of our global transportation infrastructure.
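
To make the network segmentation point concrete, here is a minimal sketch of how an allow-list of zone-to-zone flows limits lateral movement. The zone names (`xiot_sensors`, `telemetry_gateway`, `corporate_it`, `dmz`, `crane_control`) and the `ALLOWED_FLOWS` policy are hypothetical and chosen only for illustration; a real port network would define its own zones and enforce them in firewalls or switches rather than in application code.

```python
# Hypothetical segmentation policy: only these zone-to-zone flows are permitted.
ALLOWED_FLOWS = {
    ("xiot_sensors", "telemetry_gateway"),
    ("corporate_it", "dmz"),
}


def is_flow_allowed(src_zone, dst_zone, allowed=ALLOWED_FLOWS):
    """Traffic inside a zone is allowed; cross-zone traffic needs an explicit rule."""
    if src_zone == dst_zone:
        return True
    return (src_zone, dst_zone) in allowed


def reachable_zones(start_zone, allowed=ALLOWED_FLOWS):
    """Zones an attacker could reach by moving laterally from a compromised zone."""
    seen = {start_zone}
    frontier = [start_zone]
    while frontier:
        zone = frontier.pop()
        for src, dst in allowed:
            if src == zone and dst not in seen:
                seen.add(dst)
                frontier.append(dst)
    return seen


# A compromised sensor can reach its gateway, but not the crane controllers.
print(sorted(reachable_zones("xiot_sensors")))
print(is_flow_allowed("corporate_it", "crane_control"))
```

Under this assumed policy, a breach that starts in the sensor zone stays confined to the sensors and their gateway, which is the containment effect the article describes.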

There’s a new security paradigm emerging across malls, server farms, smart office buildings, and warehouses, and its advantages over the status quo are so broad they are impossible to ignore. Instead of a lecture, let’s start with a short narrative scenario to illustrate my point.

Darryl's work

Darryl works as a security guard at the Eastwood Mall. Like any typical evening, tonight’s shift begins at 9:30 pm, as the stores close and the crowds thin. His first task: ensure that by 10 pm, all mall visitors have actually left and that all doors, windows, and docks are locked securely.

As he walks through most major areas throughout the mall, he checks them off his list. All’s quiet, so after a 45-minute patrol, he stops for a quick coffee break before heading out again. He repeats the process throughout the night, happy to finish each round’s checklist and rest his feet for a few minutes.

Challenge: Vandalism during the shift

As usual, there’s nothing notable to report, so he clocks out and heads home. The next morning, however, he's greeted by an angry mall manager. He learns that sometime during his shift, three stores were robbed and a back hallway vandalized. A few closed-circuit cameras located sporadically throughout the mall recorded two dark figures moving in and out of the shadows at about 4 am.

The mall manager demands an explanation, and Darryl has none. “They must have been hiding during closing time and then waited for me to pass before acting,” he says. “I can only be in one place at one time. And if they were hiding in a dark hallway, I would never have seen them.”

Theft explanation

“Actually,” explains the manager, “we found a loading door ajar near the furniture store. We’re guessing that’s how they got in, but we can’t be sure. Do you check all the docks? We need to know if we need to replace a lock.
Look at your logs. Tell me exactly what you saw and when.”

Darryl tries to recall. “I'm pretty sure I checked that one a couple of times. I checked it off my list.” Darryl decides not to mention that at 4 am, he was feeling the night's fatigue and might have skipped that area a couple of times.

That's the end of our tale. Poor Darryl is not a bad security guard, but he’s only human. His job is repetitive and unstimulating.

Darryl's work log

Let’s discuss his hourly log. He checks off each location for the record, but there's no way for him to record the thousands of details he sees so that he can later zoom in on the few observations that might be helpful for an investigation. He has walked by that loading dock door hundreds of times, and it's all a blur.

This isn’t an unusual story; Darryl is doing the same job that humans have been doing in almost precisely the same way for millennia. And, like last night, the criminals have always found a way to avoid them. But there is a better way.

Solution: Fully-automated indoor drone

Replacing a human guard with a fully-automated indoor drone eliminates virtually all the problems we've identified in this story as it flies through the facility:

Drone teams can work 24/7: While each drone needs to dock to recharge its battery periodically, a fleet working in concert can patrol around the clock in multiple areas simultaneously. This makes it much more difficult for an intruder to move freely without risk of discovery. A drone can even keep an eye out and keep recording while docked.

Drones see and log everything: High-resolution onboard cameras and ultrasensitive sensors can detect heat, movement, and moisture, and see into dark areas much more effectively than the human eye.
As they aren't limited to the floor, they can also fly high in the air to look above obstacles and at high windows or warehouse shelves. And they don’t lose focus or get bored as the night drags on: everything is recorded and stored in full detail as they compare what they see with what they expect to see based on a previous flight. Anything unusual triggers an alert.

Drones don’t need vacations, snack/bathroom breaks, or new-recruit training: Without the need to deal with biological requirements, you aren't paying for non-work hours, and there’s no overtime for extra hours or holiday shifts. In a high-turnover business like security, there's no time spent training new employees; adding drones to your fleet simply means installing your existing procedures onto each.

There is certainly room for judgment calls that require human intervention, but these can often be handled remotely using a control panel that provides all relevant data and alerts from the drones on duty. That means no scrambling to the office in the middle of the night for a false alarm.

Drones outshine stationary cameras and the people staring at those screens: Closed-circuit cameras are expensive to install, maintain, and periodically replace. In addition, they are limited in their scope and, almost by definition, leave large blind spots. A guard in the security office staring at dozens of these screens (that generally show nothing notable) usually loses focus as the shift wears on.

Conclusion

In short, there is a good reason that our industry is following close on the heels of the manufacturing industry, which has been eagerly adopting robotics as a more cost-effective and precise solution for years. It is simply becoming harder and harder to justify the expense of the traditionally error-prone and monotonous work that we ask of our security guards.
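
The idea of comparing each patrol against a previous flight and alerting on anything unusual can be sketched in a few lines. The checkpoint names, the state labels, and the `compare_patrols` helper below are hypothetical; a real drone would derive its observations from camera and sensor data rather than hand-written labels, and this is only a simplified illustration of the comparison step.

```python
def compare_patrols(baseline, current):
    """Return (checkpoint, expected, observed) for every difference.

    `baseline` and `current` map checkpoint names to an observed state.
    A checkpoint missing from either patrol shows up with `None`.
    """
    alerts = []
    for checkpoint in sorted(set(baseline) | set(current)):
        expected = baseline.get(checkpoint)
        observed = current.get(checkpoint)
        if expected != observed:
            alerts.append((checkpoint, expected, observed))
    return alerts


# Hypothetical observations from a previous flight and tonight's flight.
baseline = {
    "loading_dock_door": "closed",
    "back_hallway": "clear",
    "furniture_store_gate": "locked",
}
current = {
    "loading_dock_door": "ajar",
    "back_hallway": "clear",
    "furniture_store_gate": "locked",
}

alerts = compare_patrols(baseline, current)
for checkpoint, expected, observed in alerts:
    print(f"ALERT {checkpoint}: expected {expected}, observed {observed}")
```

Unlike a checklist tick, each entry records what was actually observed, so the log can answer the manager's question about exactly what was seen at the loading dock and when it changed.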

Although video camera technology has been around since the early 1900s, it was not until the 1980s that video started to gain traction for security and surveillance applications. The pictures generated by these initial black and white tube cameras were grainy at best, with early color cameras providing a wonderful new source of visual data for better identification accuracy. But by today’s standards, these cameras produced images that were about as advanced as crayons and coloring books.

Fast forward to 2022, when most security cameras deliver HD performance, with more and more models offering 4K resolution and 8K on the horizon. Advanced processing techniques, with and without the use of infrared illuminators, also provide the ability to capture usable images in total darkness; and mobile devices such as drones, dash cams, body cams, and even cell phones have further expanded the boundaries for video surveillance. Additionally, new cameras feature on-board processing and memory to deliver heightened levels of intelligence at the edge.

A new way of doing things

But video has evolved beyond the capabilities of advanced imaging and performance to include another level: Artificial Intelligence. Video imaging technology combined with AI delivers a wealth of new data, not just for traditional physical security applications, but for a much deeper analysis of past, present, and even future events across the enterprise.

This is more than a big development for the physical security industry; it is a monumental paradigm shift that is changing how security system models are envisioned, designed, and deployed.
Much of the heightened demand for advanced video analytics is being driven by six prevalent industry trends:

1) Purpose-built performance

Several video analytics technologies have become somewhat commoditized “intelligent” solutions over the past few years, including basic motion and object detection that can be found embedded in even the most inexpensive video cameras. New, more powerful, and intelligent video analytics solutions deliver much higher levels of video understanding.

“Vintra custom-built their platform to focus on what matters most to security professionals: speed and accuracy.”

This is accomplished using purpose-built deep learning, employing advanced algorithms and training input capable of extracting the relevant data and information of specific events of interest defined by the user. This capability powers the automation of two important workflows: the real-time monitoring of hundreds or thousands of live cameras, and the lightning-fast post-event search of recorded video.

Vintra video analytics, for example, accomplishes this with proprietary analytics technology that defines multi-class algorithms for specific subject detection, classification, tracking, and re-identification and correlation of subjects and events captured in fixed or mobile video from live or recorded sources.

2) Increased security with personal privacy protections

The demands for increased security and personal privacy are almost contradictory given the need to accurately identify threatening and/or known individuals, whether due to criminal activity or the need to locate missing persons. But there is still societal pushback on the use of facial recognition technology to accomplish such tasks, largely surrounding the gathering and storage of Personally Identifiable Information (PII).
The good news is that this can be effectively accomplished with great accuracy without facial recognition, using advanced video analytics that analyze an individual’s whole-body signature based on various visual characteristics rather than a face. This innovative approach provides a fast and highly effective means of locating and identifying individuals without impeding the personal privacy of any individuals captured on live or recorded video.

3) Creation and utilization of computer vision

“Computer vision-driven video analytics transform professional video security systems from being purely reactive to proactive and pre-emptive solutions.”

Many terms are used to describe AI-driven video analytics, including machine learning (ML) and deep learning (DL). Machine learning employs algorithms to transform data into mathematical models that a computer can interpret and learn from, and then use to decide or predict. Add the deep learning component, and you effectively expand the machine learning model using artificial neural networks, which teach a computer to learn by example.

The combination of layering machine learning and deep learning produces what is now defined as computer vision (CV). A subset but more evolved form of machine learning, computer vision is where the work happens with advanced video analytics. It trains computers to interpret and categorize events much the way humans do to derive meaningful insights such as identifying individuals, objects, and behaviors.

4) Increased operational efficiencies

Surveillance systems with a dozen or more cameras are manpower-intensive by nature, requiring continuous live or recorded monitoring to detect and investigate potentially harmful or dangerous situations.
Intelligent video analytics, which provides real-time detection, analysis, and notification of events to proactively identify abnormalities and potential threats, transforms traditional surveillance systems from reactive to proactive sources of actionable intelligence. In addition to helping better protect people, property, and assets, advanced video analytics can increase productivity and proficiency while reducing overhead.

With AI-powered video analytics, security and surveillance are powered by 24/7 technology that doesn’t require sleep, taking breaks, or calling in sick. This allows security operations to redeploy human capital where it is most needed, such as alarm response or crime deterrence. It also allows security professionals to quickly and easily scale operations in new and growing environments.

5) A return on security investment

“With video analytics, what has always been regarded as a cost center is now being looked at as a profit center.”

The advent of advanced video analytics is slowly but surely also transforming physical security systems from necessary operational expenses into potential sources of revenue with tangible ROI, or as it is better known in the industry, ROSI (Return on Security Investment). New video analytics provide vast amounts of data for business intelligence across the enterprise. Advanced solutions can do this with extreme cost-efficiency by leveraging an organization’s existing investment in video surveillance systems technology.

This easy migration path and a high degree of cost-efficiency are amplified by the ability to selectively apply purpose-built video analytics at specific camera locations for specific applications. Such enterprise-grade software solutions make existing fixed or mobile video security cameras smarter, vastly improving how organizations and governments can automatically detect, monitor, search for, and predict events of interest that may impact physical security, health safety, and business operations.
For example, slip-and-fall analysis can be used to identify persons down or prevent future incidents, while building/area occupancy data can be used to limit crowds or comply with occupancy and distancing guidelines. In this way, the data gathered is a valuable asset that can deliver cost and safety efficiencies that manual processes cannot. 6) Endless applications The business intelligence applications for advanced video analytics platforms are virtually endless including production and manufacturing, logistics, workforce management, retail merchandising and employee deployment, and more. This also includes mobile applications utilizing dashboard and body-worn cameras, drones, and other forms of robotics for agricultural, oil and gas, transportation, and numerous other outdoor and/or remote applications. An added benefit is the ability to accommodate live video feeds from smartphones and common web browsers, further extending the application versatility of advanced video analytics. Navigating a busy intersection The accelerated rate of development for new advanced video analytics makes the intersection of video and AI technologies a very busy one to navigate. Just like crossing the street, one needs to be cautious in their approach. There are a lot of players entering this space who are making big statements and claims about their solutions. When vetting a provider, consider that it’s all about how they develop their technology, the accuracy they deliver, and their ability to leverage this new source of data to improve the specific outcomes you need to achieve. And most of all, it’s about proof of performance and how they arrived at the desired outcomes. Navigate your way across this busy intersection pragmatically, and you will find intelligent video analytics to be a real game-changer for your organization’s physical security operations.

Security beat

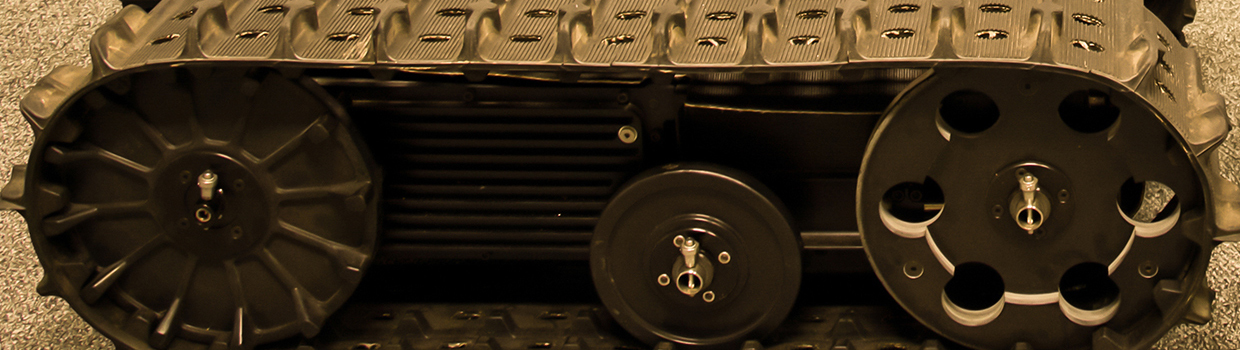

New robots and indoor drones for security applications are on the horizon, based on the work of ADT Commercial’s Innovation Lab, which is evaluating the new technologies’ value to customers and some typical use cases. The Innovation Lab has been in place for just under a year and just moved into a 2,000-square-foot facility that is staffed with four dedicated ADT Commercial employees and teams from various commercial innovation partners. The idea of the lab is to close the gap between the incubation of new technologies and the needs and realities of the ultimate customer. The goal is to adapt the design of a product to meet the customer’s need, instead of forcing the customer to adapt their use of the technology to meet its limitations. Addressing a problem “Every project or investment at the innovation lab starts with the identification of the problem, never the solution,” says Ed Bacco, Vice President, Enterprise Security Risk Group for ADT Commercial. “Then we develop detailed functional requirements to address what this technology needs to do to address the problem.” “Then – and only then – do we move toward developing the technical specifications to answer how the technology needs to operate to address the problem,” he adds. Prioritizing innovation The approach sets ADT Commercial apart from most labs. The last item they focus on is the creation of a business model to address how they can sell the technology. “Early on, the company recognized that if we truly want to focus on innovation, we need to prioritize invention over profits, which is why the lab doesn’t operate under a profit-and-loss model,” says Bacco. This article will describe two recent technologies the Innovation Lab has been working with, and how they can impact the future of the security industry. 
Halodi Humanoid Robotics The ideal use of humanoid robots is to perform jobs that are considered repetitive, dirty, dangerous, and/or mundane. In security, that describes most security guard positions. Unlike some other competitive robot solutions, Halodi Humanoid Robotics can interact with the built environment, meaning that they can autonomously open doors, call elevators, present security badges, operate PIN pads, and more. Observe and report functionality The basic use case for the bot is based on the fact that the role of 98% of all security guards is to “observe and report.” There is often a misconception in the public that guards are widely permitted to engage hands-on with alleged offenders, but most guarding contracts restrict that ability. Given the mundane and routine nature of guarding, humans find it a challenge to maintain the high degree of awareness that is needed, whereas the bots never waver, never rest and never lose awareness. Alternative to human guards The bot wasn’t designed to be “human-like.” There is a general concept in humanoid robotics called the “uncanny valley” that defines a tipping point when humans become uncomfortable with humanoid robots if their design resembles humans too closely. However, if a bot is to serve as an alternative to human guards, then it needs to be capable of interacting with an environment that was designed for humans. That means opening doors, riding elevators, bending down, picking up items, etc. Remotely operated Current robotic devices are skilled at sensing/analyzing the environments they are deployed in, but their ability to interact with them is limited. 
Another advantage of a humanoid robot is that, when it is being remotely operated in avatar mode (that is, virtually), the human operator finds the experience familiar and intuitively knows what to do. Access, intrusion, and VMS integration The bots can be integrated with access control, intrusion, and video management systems and can conduct patrols autonomously and report anomalies, and/or respond to alarms via those same systems. The bots can be equipped with other sensors to constantly analyze the environment for threats to human life such as carbon monoxide, smoke, hazardous chemicals, or poor air quality. IR capabilities They are capable of “seeing” in the Infrared Range (IR), which makes them suitable for responding to the source of fire/smoke alarms while humans evacuate. IR capabilities also enable the bot to detect the possible failing or overheating of equipment. Although designed to operate autonomously, the bot can also be controlled by a simple point-and-click mapping device or using the avatar control system. Applications “The biggest challenge we’ve seen with customers who are conducting in-field pilots is aligning their excitement of how the bots can improve their traditionally non-security applications with the current capabilities,” says Bacco. These applications include using the bots to conduct safety audits of items like fire extinguishers and Automated External Defibrillators (AEDs), serving as a fire watch, greeting visitors in the lobby, and automating gates at industrial and distribution sites. Autonomous Indoor Drones As the name implies, autonomous indoor drones are focused on flying indoors versus the outdoor environment, which is heavily regulated by the U.S. Federal Aviation Administration (FAA). 
Flying autonomously indoors not only eliminates FAA oversight but will also enable ADT Commercial to offer another choice to customers to further reduce their dependencies on and costs of human guards. “The COVID-19 pandemic has shown that customers are looking for predictable and repeatable solutions to meet their security needs that aren’t dependent on humans,” says Bacco. The three use cases for the drones are automated alarm response, random tours, and directed missions. Automated response mode In the automated response mode, when an alarm is triggered, the drone will automatically initiate a mission to the point of alarm and report any anomalies (e.g., people or heat signatures). It can also be programmed to fly random patrols as a deterrent to a possible breach, and an operator can remotely initiate a mission using a simple point-and-click map interface. Drone mobility An obvious comparison is to fixed camera systems, which are an important component of any security system. But, unlike a fixed device, the mobility of a drone enables a view of an environment that is more easily understood by humans, meaning that we think and see in 3D, whereas fixed views are limited to 2D. Added to that, there is a deterrence factor that a mobile surveillance device has over a fixed one. Noise consideration and applications The drone is designed to operate in environments that are being shared with humans. As an example, it will not initiate a mission if a person is standing under it, and it will autonomously alter course if a person is in its flight path. However, like all drones, the noise factor is a consideration, and the best applications in offices are after hours. Customers want to fly them 24/7 in more minimally manned locations such as data centers, warehouses, and manufacturing facilities, where noise is less of a factor. 
Stand-alone and integrated system An indoor drone can be operated as a stand-alone system with its own user interface, or it can be integrated fully with traditional security systems and VMSs. “We are also developing additional computer vision-based analytics that will leverage the cameras on the drone,” says Bacco.

Metaverse is a familiar buzzword today, but few people grasp what it really means. In the simplest terms, the metaverse is an online ‘place’ where physical, virtual and augmented realities are shared. The term ‘metaverse’ suggests a more immersive online environment that combines elements of augmented reality (AR) and virtual reality (VR). Expectations of the metaverse today are largely built on hype. You hear more about future and eventual potential than about the current situation. However, the long-range business opportunities are large enough to get the attention of Microsoft, Google, and other big-tech companies, all seeking to carve out a presence and lay claim to future riches in the metaverse. The marketplace aspect of the metaverse will be crucial. How eager are people to pay ‘real-world’ dollars for digital items? Successfully monetizing the metaverse will help to expand its usefulness as a tool for non-revenue-generating activities. Creation of a complex online environment For the physical security industry, the looming creation of a complex online environment offers possibilities and challenges. Establishing identity will be a central principle of the metaverse and various biometrics are at the core of ensuring the identity of someone interacting in the virtual world. Cyber security elements are also key. On the benefits side, the security market is already taking advantage of technologies related to the eventual evolution of the metaverse. For example, the industry has deployed AR to provide information about a door lock’s status onto a screen, headset or smart glasses as a patrolling guard walks by. Three-dimensional virtual dashboards Three-dimensional virtual dashboards will revolutionize user interfaces, by providing information in real-time, superimposed onto video of a live scene. Think of it as a PSIM you wear on your head. 
Anything that needs immediate attention can dominate the virtual screen. Virtual reality has also found its way into various training scenarios, including security. “We now have tools at our fingertips to support security in a physical building, including hardware, software applications, and even smart phones,” said Rob Martens, Allegion’s Chief Innovation and Design Officer, adding “The metaverse will become an aggregation point to make it simpler to pull more tools together seamlessly.” Information aggregated into a ‘virtual workspace’ Today, rather than viewing security data on a laptop, security operators can see all the information aggregated into a ‘virtual workspace’. The heightened level of automation comes with more tools to simplify how operators interact with the data, which is combined into a seamless, customized augmented reality interface that facilitates easy control of the physical environment, using tools in the virtual world. Elements of the metaverse will enable the physical security industry, among many others, to deal with employee labor shortages. The term ‘virtual workspaces’ can describe the various technology ‘hacks’ that have been developed during the COVID-19 pandemic. New tools that aid in remote working In addition to the familiar Zoom calls, companies have developed new tools that employees can use to work remotely. Elements of the metaverse will promote evolution of these tools into a seamless, responsive, real-time system that broadly encompasses more and more of an employee’s work experience into virtual form. The trend towards working remotely and the development of tools to enable the new trend were accelerated during the COVID-19 pandemic. As these tools evolve, more employees will be able to work remotely, using the same tools that are driving the metaverse. 
Development of more virtual workplace tools A critical shortage of workers in many industries will also drive development of more virtual workplace tools. Performance of even traditionally ‘hands-on’ jobs will evolve to include remote capabilities with the help of virtual tools. For example, imagine a warehouse worker who completes his tasks remotely from a computer screen or virtual reality headset that controls and directs a robot to do the heavy lifting in a factory that is miles away. People with disabilities will be able to perform more types of jobs. Remote patrolling and operation of robots In the security industry, a similar scenario is possible with the remote operation of robots to patrol a distant building. The security professional can control the robots using tools from the metaverse, such as a virtual reality headset, to direct their activities and to respond to situations, all with complete situational awareness. Video enables the operator to ‘see’ what the robot sees and the video view is augmented with various datapoints in a dashboard configuration that is completely customizable to any situation. In the virtual world, a ‘button’ can be created anywhere to control anything. In short, the same technologies that are driving the metaverse will enable more workers to accomplish more jobs from home (or the local coffee shop). Elements of the metaverse Rob Martens, Allegion’s Chief Innovation and Design Officer, said “Our labor scarcity problems will drive how successful the metaverse is for technology jobs. You will see more automation, more robotics, and more remote piloting. It’s just another way to interact with each other, with companies, with commerce, with tools – it’s just more immersive.” Elements of the metaverse could also one day replace the expensive need for security operations centers (SOCs). 
The familiar room full of video monitors and other hardware could be replaced with virtual or augmented reality headsets driven by software. Virtual Reality approach will save on power and space utilization In addition to saving the hardware costs of building the SOC, a Virtual Reality (VR) approach would save on power, space utilization, and rent, and could improve the ability to retain employees. VR headsets further enhance the experience of a security center operator by incorporating 3D graphics, video, customizable dashboards, and other elements. And everything is seamless. The greater ability to interact with others in the metaverse promotes collaboration among security professionals and ensures more efficiency. The operators in a security center would not need to be in the same room, as long as they are in the same ‘virtual space’, thus enabling them to collaborate even more effectively. Traveling to various ‘places’ in the metaverse would enable an operator to ‘visit’ any number of remote physical locations and interface efficiently with the robots and other systems in various places – all from the comfort of their home office.

Casinos offer several attractive applications for LiDAR, including security and business intelligence. Using laser sensors, the technology can replace the use of surveillance cameras. For casino security, LiDAR can track player movement and provide complete coverage and accuracy that have not been achievable with surveillance cameras. Massive coverage areas can save on costs of sensor deployment versus other technologies. LiDAR and its applications LiDAR is a method for determining ranges by targeting an object with a laser and measuring the time for the reflected light to return to the receiver. LiDAR sensors emit pulsed light waves into a surrounding environment, and the pulses bounce off surrounding objects and return to the sensor. The sensor uses the time it took for each pulse to return to calculate the distance it traveled. LiDAR is commonly used in markets such as robotics, terrestrial mapping, autonomous vehicles, and Industrial IoT (Internet of Things). Today, casinos offer a lucrative emerging market for the technology. Crowd management LiDAR can contribute to a casino’s guest experience by counting people at doors or in sections of the gaming floor to provide intelligence about crowd size to track occupancy. LiDAR tracking enables casino operators to understand the guest path, journey, queue time, count, and other statistical information by comparing previous time frames to current occupancy levels. This approach allows them to understand digital media advertisement and experience placement. Aid in advertisement “Inside a casino, sensors are deployed like surveillance cameras,” says Gerald Becker, VP of Market Development & Alliances, Quanergy. “But instead of security, they are used to provide anonymous tracking of all people walking through the gaming floor. We can get centimeter-level accuracy of location, direction, and speed of the guests. 
With this data, we can access the guest journey from the path, dwell count, and several other analytics that provides intelligence to operations and marketing to make better decisions on product placement or advertisement.” Quanergy Quanergy is a U.S.-based company that manufactures its hardware in the USA and develops its 3D perception software in-house. Quanergy has various integrations to third-party technology platforms such as video management systems (VMSs) in security and analytics for operational and business intelligence. Perimeter security LiDAR is deployed in both exterior and interior applications. For the exterior of a casino or resort, sensors can be mounted to a hotel to monitor for potential objects being thrown off the hotel, or people in areas where they should not be. For example, they can sense and prevent entrance to rooftops or private areas that are not open to the public. Some clients install sensors throughout the perimeter of private property to safeguard executives and/or a VIP’s place of residence. Flow tracking and queuing capabilities Tracking crowd size can initiate digital signage or other digital experiences throughout the property to route guests to other destinations at the property. It can also help with queue analysis at the reservation/check-in desk or other areas where guests line up to tell operations to open another line to maintain the flow of guests passing. Flow tracking and queuing capabilities help casino operators to understand which games groups of customers frequent and allow for the optimization of customer routing for increased interaction and playtime on the casino floor, quickly impacting the financial performance and return of the casino. 
No privacy concerns The main hurdle right now is market adoption. LiDAR is an emerging technology that is not so widely known for these new use cases, says Becker. “It will take a little bit of time to educate the market on the vast capabilities that can now be realized in 3D beyond traditional IoT sensors that are available now,” he comments. One benefit of LiDAR is that it poses no risk of capturing personally identifiable information (PII) and therefore raises no privacy concerns. Cameras capture images and can transmit them over the network to other applications. LiDAR, by contrast, provides a point cloud; its millions of little points in a 3D space create the silhouettes of moving or fixed objects. IoT security strategy Interest is extending from surveillance and security to include marketing and operations, says Becker. “LiDAR will become a part of the IoT security strategy for countless casinos soon. It will be common practice to see LiDAR sensors deployed to augment existing security systems and provide more coverage.” Also, the accuracy of tracking guests anonymously gives visitors peace of mind that they are not being singled out or uniquely tracked, while providing casinos with valuable data that they have not been able to capture before. “This will help them maximize their operations and strategy for years to come,” says Becker.
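
The time-of-flight principle described in the LiDAR article above (distance computed from the time a pulse takes to return) can be sketched in a few lines. This is a minimal illustration only, not Quanergy's implementation; the function names and the example timing are assumptions made for this sketch.

```python
# Minimal time-of-flight sketch. Function names and example values are
# hypothetical; real sensors also handle noise, multiple returns, and timing
# calibration, none of which is modeled here.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to the reflecting object from the round-trip pulse time.

    The pulse travels to the object and back, so the one-way distance is
    half of the total path length.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_time_s(distance_m: float) -> float:
    """Inverse relation: round-trip time expected for a given distance."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A pulse that returns after 100 nanoseconds has hit something
    # roughly 15 meters away.
    print(f"{tof_distance_m(100e-9):.3f} m")
```

The short round-trip times involved (tens of nanoseconds for a gaming floor) are why the accuracy of such a sensor depends heavily on its timing resolution.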

Case studies

The manufacturing sector is currently facing several challenges. Technological change, pressing environmental issues, and globalization require several adjustments, such as investing in new technologies, conserving resources, and optimizing and securing supply chains. Globally operating companies have to face a changing environment and at the same time manage problems in supply chains. Shifting production back to the domestic market is increasingly an option. This requires not only resilience but also compliance with strict environmental regulations and cost-efficient strategies to make domestic manufacturing competitive. Automation through robotics Moreover, those who want to ensure the competitiveness of domestic production must overcome personnel bottlenecks. Automation through robotics has long since become the driving force here, and artificial intelligence (AI) is increasingly taking on a key role. This technology is developing just as rapidly as the pressure for automation is increasing. To map production processes in one's own company with AI, the simplest possible AI integration and the shortening of training phases are already decisive factors. AI-based solution This is where British start-up Cambrian Robotics Limited comes in with a fully AI-based solution for various robotics applications in manufacturing. It takes over fast bin picking or pick-and-place, the exact feeding of parts for machines as well as different work steps in material handling, for the benefit of more efficiency in assembly tasks or warehouse logistics. The easy-to-integrate system consists of a module for robot arms, a computing unit with pre-installed intelligent software, and a camera module, each equipped in series with two uEye+ XCP cameras from IDS. 
Self-learning software "The task of the cameras is to take a picture of the area with the objects to be handled. Based on the recordings, the software can analyze the scene and recognize where exactly the objects are located," explains Miika Satori, founder and CEO of Cambrian Robotics. Further processing of the images is carried out with the help of the heart of Cambrian Vision, a specially developed, self-learning software for predicting the position of the parts as well as their pick points. This takes care of the image matching on an AI basis so that no classic 3D point cloud is needed. GPU (Graphics Processing Unit) Based on simulated data, the AI learns independently and locates the removal points and parts extremely precisely. The AI models for part recognition and communication with the robot are controlled by a powerful GPU (Graphics Processing Unit). The software learns quickly: "With the Cambrian software package, pick points for new parts can be defined and the application configured within just two to five minutes," emphasizes start-up founder Satori. uEye XCP industrial cameras The associated camera module is equipped with two space-saving uEye XCP industrial cameras. "The two IDS cameras provide images of the object scene from different viewing angles according to the stereo vision principle. The challenge is to determine the position of the part to be gripped as accurately as possible from these images. This is again the task of AI," says Miika Satori. CAD applications for 3D The combination of image acquisition, AI models, and special image processing makes it possible to determine recording points and positions particularly precisely. 
"Standard CAD applications for 3D bin picking often use structured light or sensors to do this, projecting something onto the environment, creating a point cloud, and then trying to find the part in it. Cambrian uses only two standard IDS industrial cameras instead of a 3D camera." Precise vision With an accuracy of better than one millimeter, Cambrian Vision is also much more precise than competing systems. "The system reliably detects a wide range of parts, including shiny, reflective, or transparent components, where conventional machine vision systems often reach their limits. At the same time, it remains robust against external light conditions," says Miika Satori, describing the special requirements for the cameras, which are an elementary part of the solution. 170 milliseconds inference speed "It's also super-fast, with an inference speed of less than 170 milliseconds, whereas it often takes more than 1000 milliseconds for comparable solutions." The fast calculation time allows cycle times of two to three seconds in a bin-picking setting. "This ensures efficient, precise, and accurate execution in a single pass," Miika Satori underlines. This makes the One-Shot system currently one of the fastest AI image recognition systems on the market. This is made possible not least by the SuperSpeed USB 5 Gbps cameras, which reliably deliver high-resolution data for detailed image evaluations in any environment, especially in applications with low ambient light or changing light conditions. 
Back Side Illumination pixel technology Due to BSI ("Back Side Illumination") pixel technology, the integrated sensor (1/2.5" 5.04 MPixel rolling shutter CMOS sensor onsemi AR0521) offers stable low-light performance as well as high sensitivity in the NIR (near infrared) range so that the uEye XCPs deliver high-quality images in almost any lighting situation with low pixel noise at the same time. With its compact, lightweight full housing (29 x 29 x 17 millimeters, 61 grams) and screwable USB Micro-B connector, the USB3 XCP is particularly suitable for use in combination with robots and cobots in the field of automation. XCP cameras Due to USB3 Vision standard compatibility (U3V / GenICam), the XCP cameras can be easily integrated into any image processing system and can be used with any suitable software. The simple integration via the standard interface is particularly advantageous for Miika Satori: "Depending on the customer's requirements, we use other IDS cameras in our system. The standardized interface enables rapid deployment of a wide variety of uEye models." Custom Cambrian Vision solutions Compatible with popular lenses, a wide range of cameras from the IDS portfolio can be used as eyes for custom Cambrian Vision solutions, helping to maximize production performance. The top speed, the particularly high insensitivity to lighting conditions, and the wide range of components that the system handles thanks to the powerful IDS cameras and intelligent software make it particularly interesting for automation tasks in the production environment. Intelligent 3D vision system Another key to efficiency lies in the uncomplicated integration of Cambrian Vision. 
The intelligent 3D vision system is ready for immediate use without any real robot training, a remarkable acceleration compared to conventional methods. Companies can therefore quickly reap the benefits of automation: They conserve resources and save costs by operating more efficiently, competitively, and sustainably, while improving the quality of their products and the safety of their employees. AI in robotics "The use of AI in robotics is still in its infancy," says Miika Satori. Due to the growing demand, the development in the field of image processing with AI will be further advanced, cameras with higher data rates and faster and larger sensors will come onto the market, as well as further price-optimized models with reliable basic functions. Smaller and more affordable "Industrial cameras are getting smaller and more affordable. This will enable even more applications. Our vision is to give robots capabilities on the same level as humans." By using AI-powered robots for mundane and repetitive tasks, human resources can be redirected to more creative, productive, and valuable tasks. Camera uEye XCP - the industry's smallest housing camera with a C-mount. Model used: U3-3680XCP Camera family: uEye XCP Client By combining robotics and artificial intelligence, Cambrian Robotics is developing a productive tool that replaces human hands in the manufacturing of products. With the help of intelligent automation, costs are to be reduced and people are to be given more time for meaningful tasks. Cambrian Vision is a fully AI-based solution for various robotics applications in manufacturing such as bin picking, assembly, feature recognition and localization, pick and place, placement, wire harness, and cable assembly.
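
The stereo vision principle mentioned in the Cambrian article above can be illustrated with the textbook relation for two parallel, rectified cameras: depth equals focal length times baseline divided by disparity. The article says Cambrian Vision performs the matching with AI and does not describe its algorithm, so the sketch below is only the classical geometry, and the focal length, baseline, and disparity values are hypothetical.

```python
# Textbook rectified-stereo geometry, not Cambrian's method. All numeric
# values used with these functions are hypothetical examples.


def depth_from_disparity(focal_px: float, baseline_m: float,
                         disparity_px: float) -> float:
    """Depth Z = f * B / d for two parallel, rectified pinhole cameras.

    focal_px     focal length expressed in pixels
    baseline_m   distance between the two camera centers, in meters
    disparity_px horizontal shift of the same point between both images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px


def depth_change_per_pixel(focal_px: float, baseline_m: float,
                           depth_m: float) -> float:
    """Approximate depth change caused by a one-pixel disparity error.

    Differentiating Z = f * B / d gives |dZ| ~ Z**2 / (f * B) per pixel,
    so depth uncertainty grows with the square of the distance.
    """
    return depth_m ** 2 / (focal_px * baseline_m)


if __name__ == "__main__":
    z = depth_from_disparity(focal_px=2000.0, baseline_m=0.1,
                             disparity_px=400.0)
    print(f"depth: {z:.3f} m")
    print(f"per-pixel sensitivity: "
          f"{depth_change_per_pixel(2000.0, 0.1, z) * 1000:.2f} mm")
```

The second function shows why sub-millimeter accuracy requires locating matching points to a fraction of a pixel, which is the step the article attributes to the AI models.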

Robots handle monotonous workflows and less pleasant, repetitive tasks brilliantly. Combined with image processing, they become “seeing” and reliable supporters of humans. They are used in quality assurance to check components, help with the assembly and positioning of components, detect errors and deviations in production processes, and thus increase the efficiency of entire production lines. An automobile manufacturer is taking advantage of this to improve the cycle time of its press lines. Together with the latter, VMT Vision Machine Technic Bildverarbeitungssysteme GmbH from Mannheim developed the robot-based 3D measuring system FrameSense for the fully automatic loading and unloading of containers. Pressed parts are thus safely and precisely inserted into or removed from containers. Four Ensenso 3D cameras from IDS Imaging Development Systems GmbH provide the basic data and thus the platform for process automation. Application The actual workflow that FrameSense is designed to automate is part of many manufacturing operations. A component comes out of a machine (here, a press) and runs on a conveyor belt to a container. There it is stacked. As soon as the container is full, it is transported to the next production step, e.g., assembly into a vehicle. Up to now, employees have been responsible for loading the containers. This seemingly simple subtask is more complex than one might think at first glance. In addition to the actual insertion process, the first step is to determine the appropriate free space for the part. At the same time, any interfering factors, such as interlocks, must be removed and a general check of the “load box” for any defects must be carried out. All these tasks are now to be taken over by a robot with a vision system, which is a technological challenge. 
This is because the containers also come from different manufacturers, are of different types, and thus vary in some cases in their dimensions. Positioning of the components For fully automatic loading and unloading, the position of several relevant features of the containers must be determined for a so-called multi-vector correction of the robot. The basis is a type, shape, and position check of the respective container. This is the only way to ensure process-reliable and collision-free path guidance of the loading robot. All this has to be integrated into the existing production process. Time delays must be eliminated and the positioning of the components must be accurate to the millimeter. 3D point cloud To counter this, VMT uses four 3D cameras per system. The four sensors each record a part of the entire image field. This can consist of two containers, each measuring approximately 1.5 × 2 × 1.5 meters (D × W × H). Two of the cameras focus on each container, so every container is captured from two perspectives, which improves the information quality of the 3D point cloud. The point clouds from all four sensors are combined for the subsequent evaluation. In the process, relevant features of the container are registered in Regions of Interest (ROIs) of the total point cloud. Interference contours Registration is the exact positioning of a feature using a model in all six degrees of freedom. In other ROIs, the system searches for interference contours that could lead to collisions during loading. Finally, the overall picture is compared with a stored reference model. In this way, the containers can be simultaneously checked for their condition and position in a fully automated manner. Even deformed or slanted containers can be processed. All this information is also recorded for use in a quality management system where the condition of all containers can be traced.
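The merging and ROI steps described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not VMT's MSS implementation: the function names, the 4x4 camera-to-common-frame matrices, the axis-aligned ROI boxes, and the `min_points` threshold are all hypothetical and introduced here for the example.

```python
import numpy as np

def to_common_frame(points, extrinsic):
    """Apply a 4x4 camera-to-common-frame transform to an (N, 3) point array."""
    homogeneous = np.hstack([points, np.ones((points.shape[0], 1))])
    return (homogeneous @ extrinsic.T)[:, :3]

def merge_clouds(clouds, extrinsics):
    """Bring every sensor's cloud into one frame and stack them into one cloud."""
    return np.vstack([to_common_frame(c, T) for c, T in zip(clouds, extrinsics)])

def crop_roi(points, lower, upper):
    """Keep only the points inside an axis-aligned box (a simple ROI model)."""
    mask = np.all((points >= lower) & (points <= upper), axis=1)
    return points[mask]

def has_interference(points, lower, upper, min_points=50):
    """Flag an ROI as blocked if it contains at least min_points points.

    The threshold is an assumed tuning parameter, not a value from the article.
    """
    return crop_roi(points, lower, upper).shape[0] >= min_points
```

In this sketch, calibration would supply one extrinsic matrix per camera. A real system would additionally register a feature model inside each ROI to obtain its pose in all six degrees of freedom, which this example does not attempt.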
The calibration as well as the consolidation of the measurement data and their subsequent evaluation are carried out in a separate IPC (industrial computer) with screen visualization, operating elements, and connection to the respective robot control. Image processing solution The main result of the image processing solution is the multi-vector correction. In this way, the robot is adjusted to be able to insert the component at the next possible, suitable deposit position. Secondary results are error messages due to interfering edges or objects in the container that would prevent filling. Damaged containers that are in a generally poor condition can be detected and sorted out with the help of the data. The entire image processing takes place in the image processing software Multi-Sensor Systems (MSS) developed by VMT. FrameSense is designed to be easy to use and can also be converted to other components directly on site. Robust 3D camera system On the camera side, VMT relies on Ensenso 3D cameras, initially the X36 model. The current expansion stage of FrameSense is equipped with the Ensenso C variant. The reasons for the change are mainly the better projector performance, thanks to a new projection process, as well as a higher recording speed. In addition, the Ensenso C enables a larger measuring volume. This is an important criterion for FrameSense, because the robot can only reach the containers to be filled up to a certain distance. The specifications of the Ensenso C thus correspond exactly to VMT's requirements, as project manager and technology manager Andreas Redekop explains: "High projector performance and resolution together with fast data processing were our main technical criteria when selecting the camera. 
The installation in a fixed housing was also an advantage." Ensenso models The Ensenso C addresses current challenges in the automation and robotics industry. Compared to other Ensenso models, it provides both 3D and RGB color information. Customers thus benefit from even more meaningful image data. The housing of the robust 3D camera system meets the requirements of protection class IP65/67. It offers a resolution of 5 MP and is currently available with baselines of up to approx. 455 mm. This means that even large objects can be reliably detected. The camera is quick and easy to use and primarily addresses large-volume applications, e.g., in medical technology, logistics, or factory automation. Outlook By automatically loading and unloading containers and integrating 3D container inspection, FrameSense makes it possible to automate manual workstations. Against the background of the shortage of skilled workers, the system can thus make an important contribution to process automation in the automotive industry, among others. Ensenso C provides the crucial basis for data generation and exceeds the requirements of many applications. Lukas Neumann from Product Management sees its added value especially here: “The high projector power and large sensor resolutions are particularly advantageous in the field of intralogistics. Here, high-precision components have to be gripped from a great distance with a large measuring volume.” For other stacking or bin-picking applications in classic logistics, he could imagine a similar camera with high projector power but lower resolution and fast recording. So nothing stands in the way of further developments and automation solutions in conjunction with "seeing" robots.
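The article does not say how the multi-vector correction is computed. One common way to turn several measured feature positions into a robot correction is a least-squares rigid fit (the Kabsch algorithm), sketched below with NumPy. The function names and the idea of applying the fitted transform to nominal deposit positions are assumptions made for illustration; the actual MSS approach may differ.

```python
import numpy as np

def fit_rigid_correction(reference, measured):
    """Least-squares rotation R and translation t with measured ~ R @ reference + t.

    reference, measured: (N, 3) arrays of corresponding feature positions,
    N >= 3 and not all collinear. This is the Kabsch algorithm, assumed here
    as a stand-in for the correction described in the article.
    """
    ref_centroid = reference.mean(axis=0)
    mea_centroid = measured.mean(axis=0)
    # Cross-covariance of the centered point sets
    H = (reference - ref_centroid).T @ (measured - mea_centroid)
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection so that R is a proper rotation
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = mea_centroid - R @ ref_centroid
    return R, t

def correct_positions(nominal, R, t):
    """Move nominal (taught) positions into the measured container pose."""
    return nominal @ R.T + t
```

With the container features from the reference model as `reference` and their registered positions as `measured`, the fitted transform shifts taught deposit positions to where the actual, possibly slanted, container sits. A per-feature correction for deformed containers would need more than one rigid transform.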

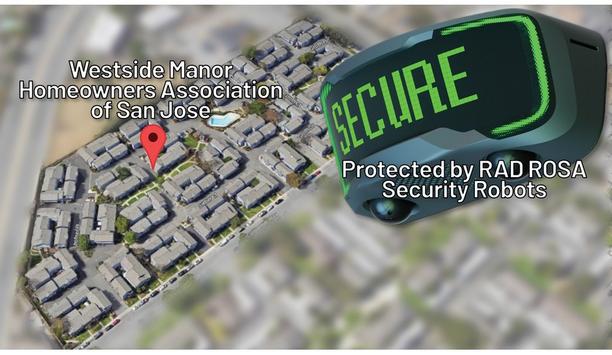

Artificial Intelligence Technology Solutions, Inc. (AITX), a pioneer in AI-driven security and productivity solutions, along with its wholly owned subsidiary, Robotic Assistance Devices, Inc. (RAD), published a case study reviewing the overwhelmingly positive results from the successful deployment of multiple ROSA security robots at a multi-family community in San Jose, California. Innovative security technology The case study titled "From Break-Ins to Breakthroughs: How a Multiple RAD ROSA System Reshaped an HOA Community’s Security" highlights the transformation of Westside Manor Homeowners Association from a vulnerable community plagued by often dangerous break-ins to a secure environment through the adoption of RAD’s innovative security technology. Struggling with limited funds and frustrated with previously ineffective measures, the HOA partnered with EPIC Security Works, a RAD-authorized dealer, to deploy multiple ROSA devices equipped with AI analytics, lights, audio, and visual messaging. This innovative approach led to a significant reduction in break-ins, effectively transforming the community's security landscape. Author's quote “This case study summarizes the detailed and coordinated efforts that RAD, its dealers, remote monitoring partners, property managers, and end users perform as we successfully disrupt the security and #proptech marketplaces,” said Steve Reinharz, CEO of AITX and RAD. “I am so proud of this winning combination where everything and everybody worked together enabling a safe and more secure environment for the community’s residents.” RAD’s software suite notification ROSA is a multiple award-winning, compact, self-contained, portable security and communication solution that can be deployed in about 15 minutes. Like other RAD solutions, it only requires power as it includes all necessary communications hardware. 
ROSA’s AI-driven security analytics include human, firearm, and vehicle detection, license plate recognition, responsive digital signage and audio messaging, and complete integration with RAD’s software suite notification and autonomous response library. Two-way communication is optimized for cellular, including live video from ROSA’s dual high-resolution, full-color, always-on cameras. RAD has published four case studies detailing how ROSA has helped eliminate instances of theft, trespassing, and loitering at multi-family communities, car rental locations, and construction sites across the country. RAD solutions AITX, through its subsidiary, Robotic Assistance Devices, Inc. (RAD), is redefining the $25 billion (US) security and guarding services industry through its broad lineup of innovative, AI-driven solutions offered under a Solutions-as-a-Service business model. RAD solutions are specifically designed to provide cost savings to businesses of between 35% and 80% when compared to the industry’s existing and costly manned security guarding and monitoring model. RAD delivers these cost savings via a suite of stationary and mobile robotic solutions that complement, and at times directly replace, human personnel in environments better suited for machines. All RAD technologies, AI-based analytics, and software platforms are developed in-house.