Qognify’s security management systems compile information from a variety of sensors to provide situational awareness, and now they can also draw on a different kind of sensor, what the company calls the “human sensor.”

Employees see and hear a lot during their workday, and some of it is relevant to security. That information can now become part of an integrated security system, reported by trusted employees through a smartphone app.

Qognify’s Extend adds new capabilities to the company’s existing Situator physical security information management (PSIM) and VisionHub video management systems and is a new element in Qognify’s interconnected product portfolio.

Using Smartphones To Report Incidents

The Extend Mobile Solutions Suite enables systems to leverage the “human sensor” by equipping employees (or students in a campus environment) with an easy-to-use app on their smartphones. If users see or hear something, they can initiate an “incident” through the app’s “See It Send It” function.

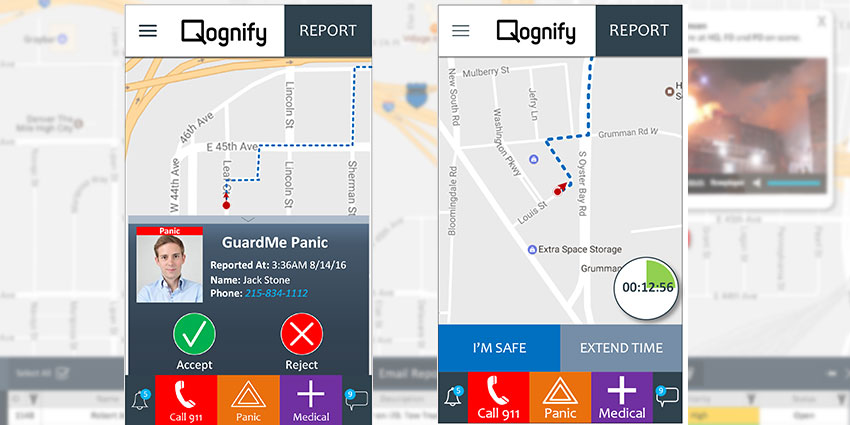

The app can also protect students and employees through a GuardMe function, which enables a security operations center to hear an employee, see their location and monitor their progress from Point A to Point B, so that any distress situation along the way is reported. The system also provides mass notification to smartphones without the installation of any additional software or hardware.

“The best sensor is the human sensor,” says Dharmesh V. Patel, Qognify’s global business initiatives vice president. “At an airport, you may have 20,000 employees, and they each know if something is awry because they work there all day long.” A reported incident might not even be a security issue; it could be something as simple as a slippery floor.

Live Video Broadcasting

Qognify Extend, the company’s rebranding of a system “powered by CloudScann,” captures data from human sensors and brings it into the Qognify platform. Because smartphones are equipped with high-resolution megapixel cameras, Extend also effectively adds a video camera (with audio) for every participating employee, which in the airport example means 20,000 cameras, all tied into a command center.


“It would take years and millions of dollars to [add that many cameras] any other way,” says Patel. “And the information is coming from your employees, which is a trusted source. Actionable information becomes part of the workflow.” In an emergency, a smartphone can stream live video to a command center, a capability called Live Video Broadcasting, even as a control room operator dispatches an officer to help.

Qognify Visual Intelligence Desktop Application

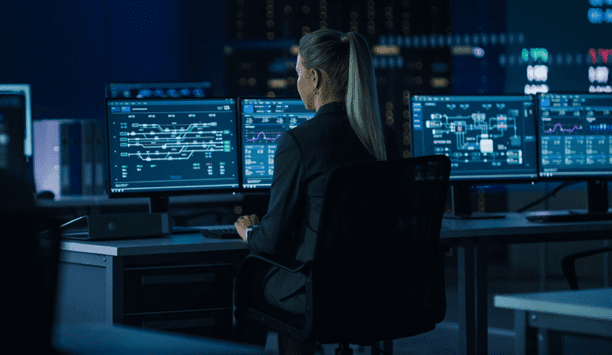

Information from Extend mobile apps feeds into the Qognify Visual Intelligence Command Center (VICC), a cloud-based desktop application that collects and aggregates information and presents it on a map, giving control room operators complete situational awareness. The live, global system compiles data from open sources anywhere in the world.

If an operator types in “New York City,” for example, the interface opens a live map showing where live cameras are viewing the Lincoln Tunnel. Additional “levels” of information provide real-time routing and traffic data, weather information and more. Beyond information from the mobile apps, the system can bring in views from any publicly accessible camera, including cameras mounted on drones hovering over the scene of an emergency.

Fast Response To Incidents

Finding information on any incident using VICC is like conducting a Google search. The system can also locate people (employees or students) based on their smartphone signals.

The availability of real-time video from a trusted source in an emergency helps shift the mission of a video system from after-the-fact review to real-time response, says Patel. And the cameras providing the video are not mounted on the ceiling; they are held by a person on the scene, closer to the action. Because smartphones provide location data, the command center knows where an incident is and can trigger a response.

“I know where it is, I can say ‘who’s my closest responder?’” says Patel. “We can see this whole situation in the command center – not just visualize it but dispatch a response.”
